| Establish relationships between probability, information & coding, hence why minimum message length (MML) is a good idea. Two-part message (H)+(D|H): complexity of the model, H, plus data, D|H. |

#1: Thursday 20 July 2000, S15, 3pm-5pm (LA)

Admin': Assessment, etc.

Examples on fair and biased coins, dice, Murli's coffee hypothesis.

Elementary probability, information, entropy, K-L distance.
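
As a small illustration of the entropy and K-L distance topics in lecture #1, the following sketch compares a fair coin with a biased one. The function names and the 0.9/0.1 bias are assumptions made for this example and are not taken from the lecture material.

```python
import math

def entropy(p):
    """Shannon entropy, in bits, of a discrete distribution p."""
    return -sum(pi * math.log2(pi) for pi in p if pi > 0)

def kl_distance(p, q):
    """Kullback-Leibler distance KL(p || q) in bits.

    Assumes q[i] > 0 wherever p[i] > 0.
    """
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)

fair = [0.5, 0.5]
biased = [0.9, 0.1]  # hypothetical bias, chosen only for illustration

print("H(fair)   =", entropy(fair))
print("H(biased) =", entropy(biased))
print("KL(fair || biased) =", kl_distance(fair, biased))
print("KL(biased || fair) =", kl_distance(biased, fair))
```

The two K-L values differ, which shows that the K-L distance is not symmetric. Against a uniform two-state distribution it equals 1 bit minus the entropy of the other distribution.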

#2: Thursday 27 July 2000, S15, 3pm-5pm (LA)

K-L distance examples on discrete distributions.

Coding, properties that codes can have, prefix property,

codes and entropy, Kraft inequality,

Shannon's communication theory (hence min message length),

Huffman code algorithm,

Arithmetic Coding basic idea and properties.
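
The Huffman and Kraft inequality topics above can be sketched as follows. This is a minimal illustration rather than the lecture's own presentation: it computes only Huffman code lengths (not the code words), and the four-symbol distribution is a made-up example.

```python
import heapq
import math

def huffman_code_lengths(probs):
    """Return {symbol: code length in bits} for a Huffman code over probs."""
    if len(probs) == 1:
        return {s: 1 for s in probs}
    # Heap entries: (probability, unique tie-breaker, symbols in this subtree).
    heap = [(p, i, [s]) for i, (s, p) in enumerate(probs.items())]
    heapq.heapify(heap)
    lengths = {s: 0 for s in probs}
    counter = len(heap)
    while len(heap) > 1:
        # Merge the two least probable subtrees.
        p1, _, s1 = heapq.heappop(heap)
        p2, _, s2 = heapq.heappop(heap)
        for s in s1 + s2:
            lengths[s] += 1  # each merge adds one bit to every symbol beneath it
        heapq.heappush(heap, (p1 + p2, counter, s1 + s2))
        counter += 1
    return lengths

# Hypothetical distribution, chosen so every probability is a power of 1/2.
probs = {"a": 0.5, "b": 0.25, "c": 0.125, "d": 0.125}
lengths = huffman_code_lengths(probs)

kraft_sum = sum(2.0 ** -l for l in lengths.values())
expected_len = sum(probs[s] * lengths[s] for s in probs)
entropy = -sum(p * math.log2(p) for p in probs.values())

print("lengths:", lengths)
print("Kraft sum:", kraft_sum)
print("expected length:", expected_len, "entropy:", entropy)
```

For this distribution the Kraft sum is exactly 1 and the expected code length equals the entropy. In general a Huffman code's expected length lies within one bit above the entropy.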

[Prac' #1]

#3: Thursday 3 August 2000, S15, 3pm-5pm (LA)

Inference, model class, model, parameter estimation - really the same thing.

| Fisher information and its relevance to model complexity, two-part messages etc. Building blocks: finite discrete, infinite discrete, and continuous distributions. |

#4: Thursday 10 August 2000, S15, 3pm-5pm (LA)

Fisher information and a general form for message length and accuracy of parameter estimate.

Multiple parameters, Fisher information matrix.

3-state and multi-state distributions.

Codes / distributions for integers - an infinite data space.
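
One standard example of a prefix code over the positive integers is the Elias gamma code, sketched below. It is offered only as an illustration of how a code implies a distribution over an infinite data space; it is not necessarily the code covered in the lecture, and the function names are assumptions.

```python
def elias_gamma(n):
    """Elias gamma code word for a positive integer n, as a string of bits."""
    if n < 1:
        raise ValueError("n must be a positive integer")
    binary = bin(n)[2:]  # binary form of n; its leading bit is always 1
    # Prefix with (length - 1) zeros so a decoder knows how many bits follow.
    return "0" * (len(binary) - 1) + binary

def elias_gamma_decode(bits):
    """Decode a single Elias gamma code word back to its integer."""
    zeros = 0
    while bits[zeros] == "0":
        zeros += 1
    return int(bits[zeros:2 * zeros + 1], 2)

for n in (1, 2, 3, 4, 5):
    print(n, elias_gamma(n))

# A code word of length L corresponds to an implied probability 2**-L.
partial_sum = sum(2.0 ** -len(elias_gamma(n)) for n in range(1, 1024))
print("implied probability of 1..1023:", partial_sum)
```

The implied probabilities sum to 1 over all positive integers, so the code corresponds to a proper distribution. The partial sum over 1..1023 is already within 2^-10 of 1.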

#5: Thursday 17 August 2000, S15, 3pm-5pm (DLD)

MsgLen using Fisher information - Binomial distribution, MML estimator.

Poisson distribution, MML estimator.

Normal (Gaussian) distribution.
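
For the binomial item in lecture #5, the sketch below evaluates the usual Fisher-information approximation to the two-part message length and checks that it is minimised at the estimate (heads + 1/2) / (N + 1). It assumes a uniform prior on the parameter p and codes the sequence of outcomes; the constants and the function names are assumptions of this example and may differ from the form used in the lecture.

```python
import math

def binomial_msglen(p, heads, n):
    """Approximate two-part message length in nits for n coin tosses.

    Uses the Fisher-information form with one parameter, assuming a
    uniform prior density h(p) = 1 on [0, 1].
    """
    tails = n - heads
    neg_log_prior = 0.0  # -log h(p) for the assumed uniform prior
    half_log_fisher = 0.5 * math.log(n / (p * (1.0 - p)))  # F(p) = n / (p (1 - p))
    neg_log_lik = -(heads * math.log(p) + tails * math.log(1.0 - p))
    const = 0.5 * (1.0 + math.log(1.0 / 12.0))  # one-parameter lattice constant 1/12
    return neg_log_prior + half_log_fisher + neg_log_lik + const

def binomial_mml_estimate(heads, n):
    """Minimiser of binomial_msglen over p, under the uniform-prior assumption."""
    return (heads + 0.5) / (n + 1.0)

heads, n = 3, 10  # hypothetical data
p_mml = binomial_mml_estimate(heads, n)
p_ml = heads / n  # maximum likelihood estimate, for comparison

print("MML estimate:", p_mml, "message length:", binomial_msglen(p_mml, heads, n))
print("ML estimate: ", p_ml, "message length:", binomial_msglen(p_ml, heads, n))
```

The estimate is pulled slightly towards 1/2 compared with the maximum likelihood value heads / N, because the Fisher term penalises stating p to the precision the data would need near 0 or 1.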

#6: Thursday 24 August 2000, S15, 3pm-5pm (DLD)

Mixture modelling (aka clustering, numerical taxonomy, unsupervised classification).

Normal (Gaussian) distribution, MML estimator via Fisher information.

| The payoff: Higher-level models for interesting problems. - Mixture modelling, - Decision (Classification) trees, - Curve & polygon fitting, - Sequence alignment, - Compression & pattern discovery, - Combined |

#7: Thursday 31 August 2000, S15, 3pm-5pm (LA. NB. DLD away at a conference)

Structured (higher level, compound) hypotheses.

Mixture modelling (clustering), nuisance parameters, partial assignment.

Polygon fitting (and related problems, e.g. segmentation, curve fitting).

Sequences: Intro' to edit distance, and similar, and applications.

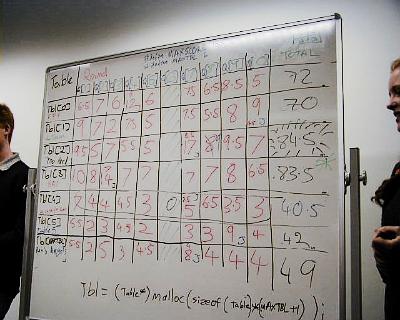

5/9/2000: Team `Too Hard' (mostly MML) wins the Computer Science Students Club Trivia Night. Bayesian A.I. comes second.

|

#8: Thursday 7 September 2000, S15, 3pm-5pm (DLD)

More on mixture modelling.

(NB. We are a bit out-of-sync', and destined to remain so, because DLD is away next week also.)

#9: Thursday 14 September 2000, S15, 3pm-5pm (LA)

Return to sequence analysis, alignment, comparison etc.

#10: Thursday 21 September 2000, S15, 3pm-5pm (DLD)

Decision Trees (more properly Classification Trees).

| NB. 25 - 29 September, semester break |

#11: Thursday 5 October 2000, S15, 3pm-5pm (DLD)

Discussion of prac #2.

Decision Trees: K-L distance between a (function corresponding to a decision) tree and an inferred decision tree.

Splitting and continuous-valued attributes.

#12: Thursday 12 October 2000, S15, 3pm-5pm (DLD)

#13: Thursday 19 October 2000, S15, 3pm-5pm (revision?)