A System Model for a SCSI Disk I/O Channel

Originally published August, 1995

by Carlo Kopp

© 1995, 2005 Carlo Kopp

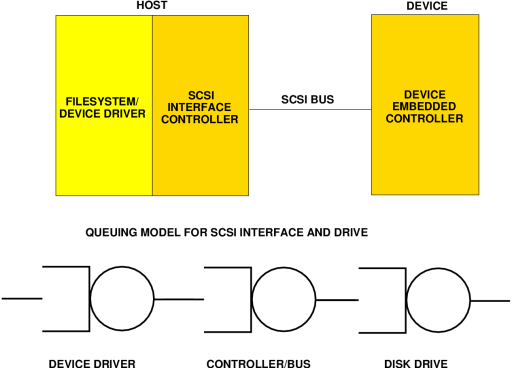

The SCSI bus is, even at moderate clock speeds, very much faster than any disk drive. This is best illustrated by example. Consider a block read, resulting say from a page fault, where the block is not held in the Unix buffer cache. The filesystem will be called upon to determine the block address required. Assuming, perhaps optimistically, that this does not require fetching the inode, the filesystem will pass the read command to the SCSI device driver. The device driver will place the request in its queue and, again perhaps optimistically, we will assume the queue is empty and the request is passed straight to the disk. All of this has transpired in a few tens of microseconds, on a good machine.

Assuming again, optimistically, that both the SCSI bus and the drive are idle, the host will go through an arbitration cycle with the drive, and the drive will fetch the block address. From this point, the drive must fetch the block and return it to the host. If a cache miss is experienced, the drive must read the block from its surfaces which, as discussed above, usually takes quite a few milliseconds. Once the block is found, the drive arbitrates for the bus and sends the block back to the host. The device driver will then use a DMA (Direct Memory Access) controller to load the block into memory. Once this has occurred, various items of OS housekeeping will be carried out to complete the I/O operation.

This picture intentionally omits the gory details, so as to illustrate the path which the block must traverse from the surfaces of the disk drive into the memory of the host. What is important from a performance perspective is the amount of time spent doing each of these tasks. A typical time budget (assuming a contemporary machine), which illustrates the orders of magnitude involved, is as follows:

As is clearly evident, the dominant factor here is the disk drive access time, which is about one thousand times greater than the time required, in the best case, to execute the various operating system activities required. This is a contrived model, where the worst case drive time is compared against a best case operating system time. However, even should the operating system take more than a millisecond to do its work, it is still 10 times faster than the disk drive's physical access cycle.

It is the author's view that much of the performance gain to be realised by using a fast (10 MHz) SCSI bus against a slow (5 MHz) SCSI bus is vastly overstated by our brethren in the sales community. Only where a very high disk drive cache hit rate is achieved consistently will the faster bus provide a notable improvement in I/O throughput performance. It is only under such conditions that the transfer time of the data block across the bus, measurable in microseconds, is significant in comparison with the other times involved in the process. Where the hit rate is mediocre, throughput performance will be limited by achieved drive access times.
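The dependence of the fast-bus gain on cache hit rate can be sketched numerically. The figures below are assumptions chosen only to match the orders of magnitude discussed in the text (50 microseconds of operating system overhead, 15 milliseconds of physical access time, a 4096 byte block, an 8-bit bus moving one byte per clock); they are not taken from the time budget above, and the function names are hypothetical. Arbitration and protocol overheads are ignored.

```python
# Assumed figures for illustration only; adjust to suit the system at hand.
OS_OVERHEAD_US = 50.0        # filesystem, driver and housekeeping time (assumed)
DRIVE_ACCESS_US = 15_000.0   # physical access time on a drive cache miss (assumed)
BLOCK_BYTES = 4096           # block size (assumed)


def transfer_us(block_bytes, bus_mhz, bus_width_bytes=1):
    """Time in microseconds to move one block across the bus.

    Ignores arbitration and protocol overhead; an 8-bit bus is assumed
    to move one byte per clock cycle.
    """
    bytes_per_us = bus_mhz * bus_width_bytes
    return block_bytes / bytes_per_us


def mean_io_us(hit_rate, bus_mhz):
    """Mean time per block read for a given drive cache hit rate."""
    miss_penalty = (1.0 - hit_rate) * DRIVE_ACCESS_US
    return OS_OVERHEAD_US + miss_penalty + transfer_us(BLOCK_BYTES, bus_mhz)


def speedup(hit_rate):
    """Ratio of mean I/O time on a 5 MHz bus to that on a 10 MHz bus."""
    return mean_io_us(hit_rate, 5.0) / mean_io_us(hit_rate, 10.0)


if __name__ == "__main__":
    for hit_rate in (0.0, 0.5, 0.9, 1.0):
        print(f"hit rate {hit_rate:4.0%}: "
              f"5 MHz {mean_io_us(hit_rate, 5.0):8.1f} us, "
              f"10 MHz {mean_io_us(hit_rate, 10.0):8.1f} us, "
              f"speedup {speedup(hit_rate):.2f}x")
```

With these assumed figures the faster bus buys under 3% when every read misses the drive cache, and approaches a factor of two only when every read hits it.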

A more effective strategy for dealing with this problem is to split the drives across multiple controllers, as this can most effectively reduce the probability that the bus will transiently saturate. It also yields another benefit, in that the queuing delays associated with the device driver and SCSI controller are reduced. Given the asymptotic behaviour of queuing systems, the gain can be better than one might expect.

The worth of using multiple SCSI controllers should not be underestimated, particularly where the device driver and host controller chip are not the most efficient, or the host CPU is lacking performance. The result of poor interface performance will be the queuing up of outstanding I/O requests to the interface. Should the disk caches be performing particularly well, the bottleneck to throughput could well be found in the interface. Using multiple interfaces cuts the average queue lengths and in turn the delay in servicing the I/O request.

One SCSI-2 feature which can provide a useful performance gain is tagged queuing. Tagged queuing allows a drive to queue up multiple SCSI commands received from the host. This can provide a significant performance gain, as the drive logic can then optimise the drive's seek pattern against the block addresses in the queue. In this fashion the drive can get the best possible access time for the set of blocks in the command queue, regardless of the filesystem's implicit assumptions about the drive. Tagged queuing must also be supported by the device driver and SCSI controllers on the host, which must know how to generate the appropriate SCSI messages and manage the state of the driver. If the drive, controller or OS does not support the feature, it is unusable. The importance of tagged queuing is that it attacks the most difficult component of the performance equation, the drive's physical access time, and it allows the drive manufacturer to use an optimal strategy for its product.
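The benefit of letting the drive reorder a queue of commands can be illustrated with a deliberately simplified sketch. It treats block addresses as positions along a single axis and compares servicing the queue in arrival order with a single elevator-style sweep. This is an assumption made for illustration: real drive firmware is proprietary and also accounts for rotational position and head switching, and the function names and the sample queue here are hypothetical.

```python
def head_travel(start, order):
    """Total distance the head moves when servicing blocks in the given order."""
    travel, position = 0, start
    for block in order:
        travel += abs(block - position)
        position = block
    return travel


def sweep_order(start, queue):
    """Elevator-style ordering of a command queue.

    Blocks at or above the current head position are serviced in ascending
    order, then the remaining blocks in descending order on the way back.
    """
    upward = sorted(b for b in queue if b >= start)
    downward = sorted((b for b in queue if b < start), reverse=True)
    return upward + downward


if __name__ == "__main__":
    start = 5000                                  # assumed current head position
    queue = [9800, 120, 7300, 450, 5100, 60]      # assumed queued block addresses

    print("arrival order travel:", head_travel(start, queue))
    print("sweep order:         ", sweep_order(start, queue))
    print("sweep order travel:  ", head_travel(start, sweep_order(start, queue)))
```

For this sample queue the head travels 38200 address units in arrival order and 14540 after reordering. Without tagged queuing the drive sees one command at a time and has no queue to reorder.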
Alas, it is a sad part of our industry mythology that SCSI clock speed is prized more highly in the marketplace than physical access time, the caching strategy used and support for tagged queuing. However, it is fair to say that the plague of non-Unix machines with primitive filesystems has been a major contributor to this situation, as such systems are unable to exploit the latter features properly.

Summary

The performance of SCSI disk drives is highly sensitive to the cache hit rate achievable and, where the cache misses, to the conventional performance metrics of the disk drive. What this means in practice is that a systems integrator should focus on matching the filesystem in use with the caching strategy employed on the drive, and on selecting drives with the best possible physical access performance. Should the device driver used support tagged queuing, only drives which provide tagged queuing should be used. As most modern drives now employ fast 10 MHz interfaces, the issue of whether to use fast SCSI or not no longer arises. Where more than three drives are used on a chain, consideration should be given to splitting the drives across multiple controllers.

In perspective, it is fair to say that caution is always warranted when selecting disk drives for your system, and it never hurts to ask the vendor for more technical detail on some of the issues discussed here. The results are by all means well worth the effort.
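The advice to split drives across controllers rests on how sharply queuing delay grows as an interface nears saturation. A rough sketch of that effect follows, assuming each controller behaves as a simple M/M/1 queue (random arrivals, a single server, mean time in system of 1 / (service rate - arrival rate)). That model is an assumption made here for illustration, not one stated in the article, and the rates used are invented.

```python
def mean_response_s(arrival_rate, service_rate):
    """Mean time a request spends queued plus in service, for an M/M/1 queue.

    Rates are in requests per second. The queue is only stable when the
    arrival rate is below the service rate.
    """
    if arrival_rate >= service_rate:
        raise ValueError("unstable queue: arrival rate must be below service rate")
    return 1.0 / (service_rate - arrival_rate)


def split_gain(total_arrival_rate, service_rate, controllers):
    """Factor by which mean response time falls when the load is spread
    evenly over several identical controllers instead of one."""
    single = mean_response_s(total_arrival_rate, service_rate)
    split = mean_response_s(total_arrival_rate / controllers, service_rate)
    return single / split


if __name__ == "__main__":
    service_rate = 1000.0   # requests/s one controller can service (assumed)
    for load in (500.0, 800.0, 900.0, 950.0):
        print(f"load {load:5.0f}/s: one controller "
              f"{mean_response_s(load, service_rate) * 1e3:6.2f} ms, "
              f"two controllers "
              f"{mean_response_s(load / 2, service_rate) * 1e3:6.2f} ms, "
              f"gain {split_gain(load, service_rate, 2):.1f}x")
```

Under these assumptions a controller running at 90% of its capacity shows a mean response time of 10 ms, which falls to about 1.8 ms when the same load is shared by two controllers. Halving the load per interface yields far more than a halving of the delay.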

$Revision: 1.1 $

Last Updated: Sun Apr 24 11:22:45 GMT 2005

Artwork and text © 2005 Carlo Kopp